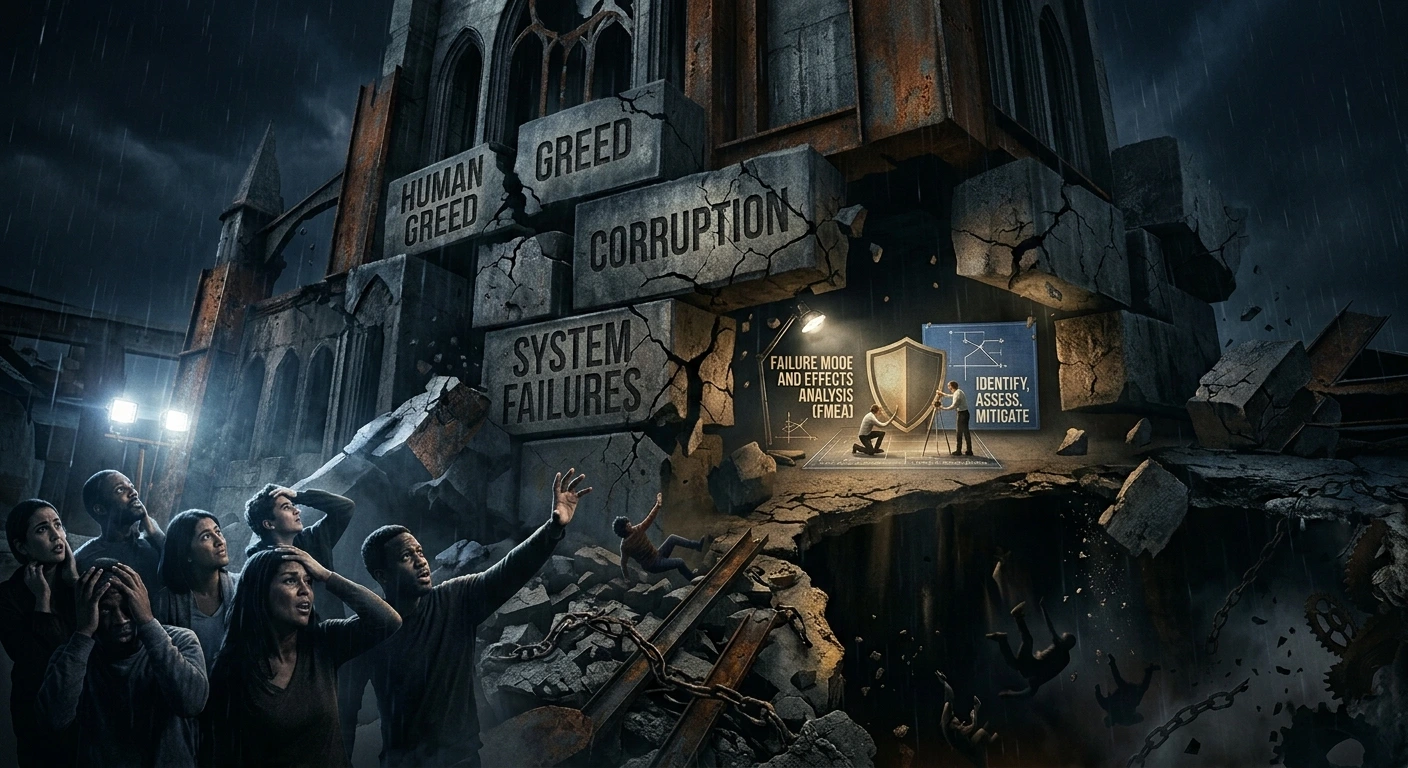

The Most Dangerous Failures Rarely Begin as Technical Problems

When major failures occur in industry, business, infrastructure, healthcare, or finance, the visible breakdown is often only the final symptom.

The actual failure usually begins much earlier.

Not with a machine breakdown.

Not with a system collapse.

Not with a disaster headline.

But with:

- ignored warnings,

- hidden assumptions,

- normalized deviations,

- weak accountability,

- manipulated reporting,

- fear-driven silence,

- and short-term decision-making.

Many catastrophic failures are not engineering failures first. They are governance and behavioural failures that later manifest technically.

This is where Failure Mode and Effects Analysis (FMEA) thinking becomes far more important than most organizations realize.

How Human Behaviour Creates System Failures

In theory, organizations operate through processes, controls, standards, and audits.

In reality, systems are driven by people.

And people are influenced by:

- incentives,

- pressure,

- fear,

- ambition,

- politics,

- ego,

- financial targets,

- and organizational culture.

When these factors become misaligned, risks begin to grow silently.

Common Human-Driven Failure Mechanisms

1. Short-Term Cost Thinking

Organizations may compromise maintenance, testing, training, or quality controls to reduce short-term expenses.

Initially, this appears financially beneficial.

Over time, hidden reliability degradation accumulates.

2. Fear-Driven Reporting Culture

Employees may hesitate to escalate risks because:

- leadership discourages bad news,

- blame culture exists,

- transparency is punished,

- or hitting performance metrics matters more than reporting what is really happening.

As a result, weak signals remain buried until failures become severe.

3. Normalization of Deviations

One temporary workaround becomes routine practice.

Minor non-conformities gradually become “acceptable.”

Eventually, the organization loses the ability to distinguish between controlled operations and unsafe conditions.

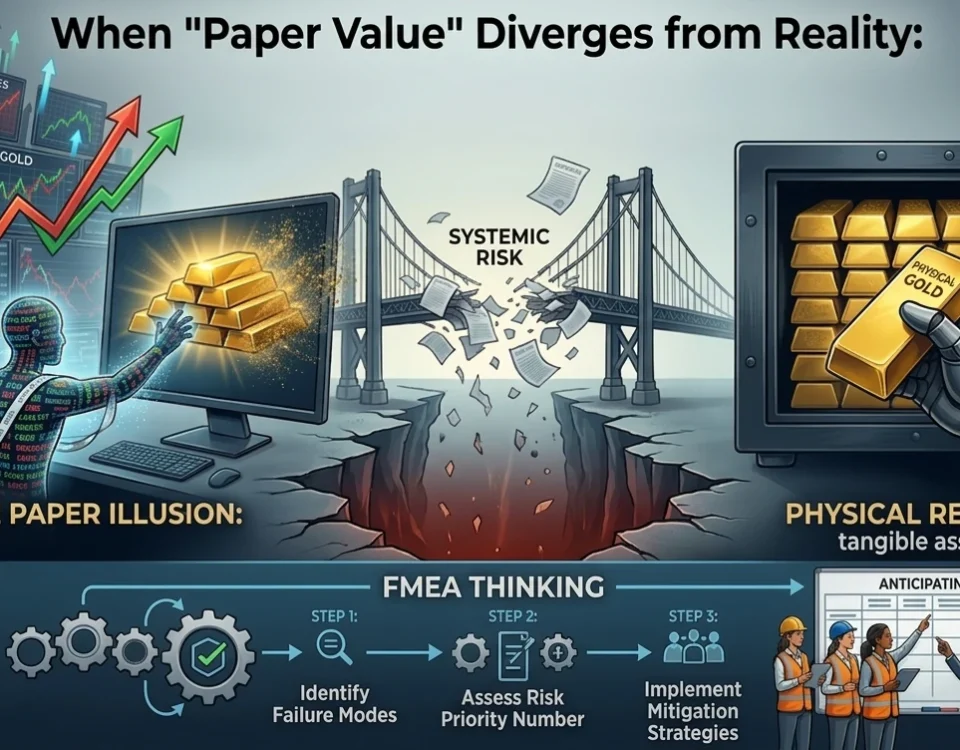

4. Compliance Over Integrity

Some systems become highly compliant on paper but weak in operational reality.

Documents look perfect.

Audits appear successful.

Dashboards remain green.

Meanwhile, actual risks remain unidentified and uncontrolled.

Why Traditional Systems Often Fail to Detect These Risks

Most organizations monitor:

- outputs,

- targets,

- KPIs,

- schedules,

- budgets,

- and performance metrics.

But many fail to monitor:

- assumption quality,

- hidden dependencies,

- behavioural risks,

- escalation barriers,

- ownership clarity,

- and systemic weaknesses.

As complexity increases, these hidden risks compound faster than organizations can react.

This creates fragile systems.

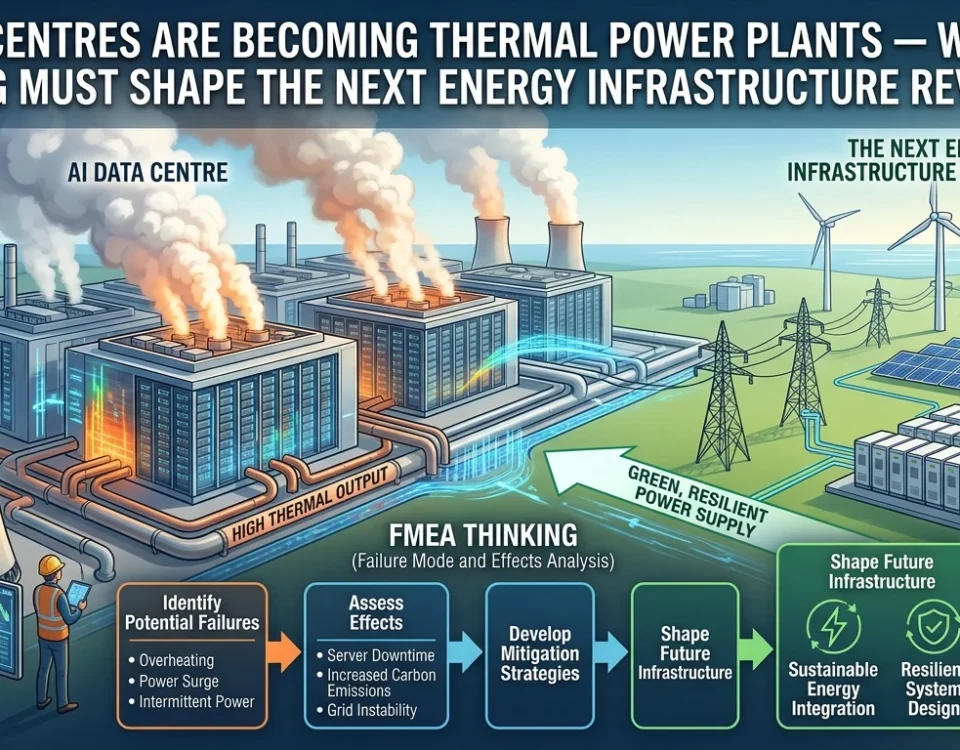

How FMEA Thinking Changes the Approach

Failure Mode and Effects Analysis (FMEA) is often viewed as a technical quality tool.

In reality, its true value is much broader.

At its core, FMEA forces organizations to ask structured preventive questions:

- What can fail?

- Why can it fail?

- What would be the consequences?

- How likely is it?

- Can it be detected early?

- Are existing controls sufficient?

- Who owns the mitigation?
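These questions map directly onto the columns of a conventional FMEA worksheet. In the widely used traditional scoring scheme, each failure mode is rated from 1 to 10 for severity (consequence), occurrence (likelihood), and detection (where 10 means current controls are unlikely to catch the failure in time). The three ratings are multiplied into a Risk Priority Number, RPN = S × O × D. The sketch below assumes that scheme. The class name, field names, and example row are hypothetical. Some newer standards, such as the AIAG-VDA FMEA handbook, rank actions with an Action Priority table instead of RPN.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FailureMode:
    """One row of a simplified FMEA worksheet (hypothetical field names)."""
    item: str                    # What can fail?
    failure_mode: str
    cause: str                   # Why can it fail?
    effect: str                  # What would be the consequences?
    severity: int                # 1 (negligible) .. 10 (catastrophic)
    occurrence: int              # 1 (remote) .. 10 (almost certain): how likely is it?
    detection: int               # 1 (almost certainly caught) .. 10 (practically undetectable)
    controls: str                # Are existing controls sufficient?
    owner: Optional[str] = None  # Who owns the mitigation?

    def __post_init__(self) -> None:
        # Reject ratings outside the assumed 1-10 scale.
        for name in ("severity", "occurrence", "detection"):
            value = getattr(self, name)
            if not 1 <= value <= 10:
                raise ValueError(f"{name} must be between 1 and 10, got {value}")

    @property
    def rpn(self) -> int:
        """Risk Priority Number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


# Hypothetical example: a cost-driven maintenance cut creates a new failure cause.
pump_seal = FailureMode(
    item="Cooling pump",
    failure_mode="Seal leak",
    cause="Maintenance interval extended to cut costs",
    effect="Loss of cooling, unplanned shutdown",
    severity=8,
    occurrence=5,
    detection=6,
    controls="Monthly visual inspection",
    owner="Maintenance lead",
)
print(pump_seal.rpn)  # → 240
```

The `owner` field is the easiest one to leave empty. It is also the field that answers the last question in the list above.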

This mindset fundamentally shifts organizations from reactive thinking to proactive risk anticipation.

FMEA Is Not Just for Machines

Many organizations restrict FMEA to:

- manufacturing,

- product design,

- automotive systems,

- or engineering processes.

But modern risks increasingly emerge from interconnected human and organizational systems.

FMEA thinking can—and should—be applied to:

- leadership systems,

- supply chains,

- procurement,

- cybersecurity,

- operational governance,

- project management,

- decision-making structures,

- communication interfaces,

- and organizational culture itself.

Because systems fail through people as much as through technology.

Why Many FMEA Systems Fail in Practice

Ironically, many organizations conduct FMEAs but still experience major failures.

Why?

Because the FMEA process itself becomes compromised.

Common Failure Patterns in FMEA Implementation

Checkbox FMEA

FMEA becomes an audit requirement instead of a living risk process.

Teams complete templates mechanically without genuine analysis.

Lack of Ownership

Risks are identified, but nobody truly owns mitigation responsibility.

Without accountability, controls weaken over time.

Fear of Escalation

Teams underrate severity to escape management attention or avoid operational disruption.

This artificially hides the true level of risk.

Copy-Paste Thinking

Old FMEAs are reused without reassessing evolving system complexity or changing operating conditions.

Weak Cross-Functional Integration

Engineering, operations, maintenance, procurement, and leadership often operate in silos.

Risks that propagate across interfaces remain undetected.
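No script can tell whether a severity rating is honest. Some of the patterns above do leave traces in the worksheet data, though, and a periodic check can surface them. The following is a minimal sketch under stated assumptions. `FmeaRow`, `review_fmea`, the one-year review limit, and the severity threshold of 9 are hypothetical choices, not taken from any FMEA standard or tool. The sketch flags rows with no mitigation owner (lack of ownership) and rows not reassessed since the last process change or within a year (copy-paste thinking). It also escalates high-severity rows on severity alone, so that a low occurrence or detection score cannot bury them inside a small RPN.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# Hypothetical thresholds; real values belong in the organization's own FMEA procedure.
MAX_REVIEW_AGE = timedelta(days=365)
HIGH_SEVERITY = 9


@dataclass
class FmeaRow:
    failure_mode: str
    severity: int
    occurrence: int
    detection: int
    owner: Optional[str]
    last_reviewed: date


def review_fmea(rows: List[FmeaRow], today: date, last_process_change: date) -> List[str]:
    """Return human-readable findings for common FMEA implementation failures."""
    findings = []
    for row in rows:
        # Lack of ownership: a risk nobody owns is a risk nobody mitigates.
        if not row.owner:
            findings.append(f"{row.failure_mode}: no mitigation owner")
        # Copy-paste thinking: row predates the latest process change, or is simply old.
        stale = (
            row.last_reviewed < last_process_change
            or today - row.last_reviewed > MAX_REVIEW_AGE
        )
        if stale:
            findings.append(
                f"{row.failure_mode}: not reassessed since {row.last_reviewed.isoformat()}"
            )
        # Escalate on severity alone, independent of the RPN product.
        if row.severity >= HIGH_SEVERITY:
            rpn = row.severity * row.occurrence * row.detection
            findings.append(
                f"{row.failure_mode}: severity {row.severity} (RPN {rpn}) requires review"
            )
    return findings


rows = [
    FmeaRow("Seal leak", 8, 5, 6, "Maintenance lead", date(2024, 3, 1)),
    FmeaRow("Supplier part substitution", 9, 2, 3, None, date(2021, 6, 15)),
]
findings = review_fmea(rows, today=date(2024, 9, 1), last_process_change=date(2023, 1, 10))
for finding in findings:
    print(finding)
```

In this hypothetical data, the supplier row produces all three findings. Its RPN of 54 is less than a quarter of the seal leak's 240, so a ranking by RPN alone would push it down the list, even though its severity is higher.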

The Need for Ownership-Driven and Self-Correcting Systems

Strong organizations do not merely create controls.

They create systems that continuously:

- detect weak signals,

- challenge assumptions,

- encourage escalation,

- reassess risks,

- and adapt dynamically.

This is what separates resilient systems from fragile ones.

Characteristics of High-Reliability Organizations

Transparent Risk Escalation

Employees can report concerns without fear.

Clear Accountability

Every major control has visible ownership.

Continuous Learning

Failures and near-misses become learning mechanisms rather than blame events.

Dynamic Risk Reviews

FMEA is continuously updated based on operational feedback, incidents, and evolving complexity.

Cross-Functional Traceability

Decisions remain connected across the entire lifecycle:

design → manufacturing → operations → maintenance → field performance.

Human Behaviour Is Often the Root Cause Multiplier

Technical systems are usually designed with tolerances and safeguards.

But when:

- reporting becomes political,

- integrity weakens,

- leadership avoids criticism,

- and risks are normalized,

then even strong technical systems begin to fail.

The challenge is not merely preventing technical failure.

The challenge is preventing organizations from becoming blind to emerging risks.

FMEA as a Philosophy, Not Just a Tool

The greatest value of FMEA is not the spreadsheet.

It is the mindset.

A mindset that continuously asks:

- What are we missing?

- What assumptions are unchallenged?

- Where are hidden dependencies growing?

- Which controls are weakening?

- Are we detecting weak signals early enough?

- Are people afraid to speak openly?

This transforms FMEA from a compliance activity into a living governance system.

Final Reflection

Human greed, corruption, fear, and poor incentives can absolutely create catastrophic failures.

But such failures rarely emerge overnight.

They grow silently through:

- ignored warnings,

- unmanaged assumptions,

- weak ownership,

- and systems that stop learning.

FMEA thinking cannot eliminate human nature.

But it can create structures that:

- expose hidden risks early,

- strengthen accountability,

- improve traceability,

- encourage transparency,

- and build proactive, self-correcting systems.

In the future, the strongest organizations will not simply be the fastest-growing ones.

They will be the ones that can continuously identify, confront, and control risks before failures become uncontrollable.

Because reliability is ultimately not just about engineering.

It is about culture, integrity, ownership, and the courage to challenge hidden risks before they become disasters.